High-resolution image synthesis with latent diffusion models is a prominent technique in AI image generation as of 2026. It combines diffusion models with a learned latent space: instead of denoising pixels directly, the model iteratively refines noise in a compressed representation of the image, and a decoder then maps the result back to pixel space.

The significance of this technology lies in its ability to generate photorealistic, high-resolution images at a lower computational cost than diffusion in pixel space, opening new avenues in fields such as entertainment, design, and scientific visualization. This article explores how latent diffusion models work, how they compare to other generative methods, where they are applied, and which challenges remain.

Understanding Latent Diffusion Models

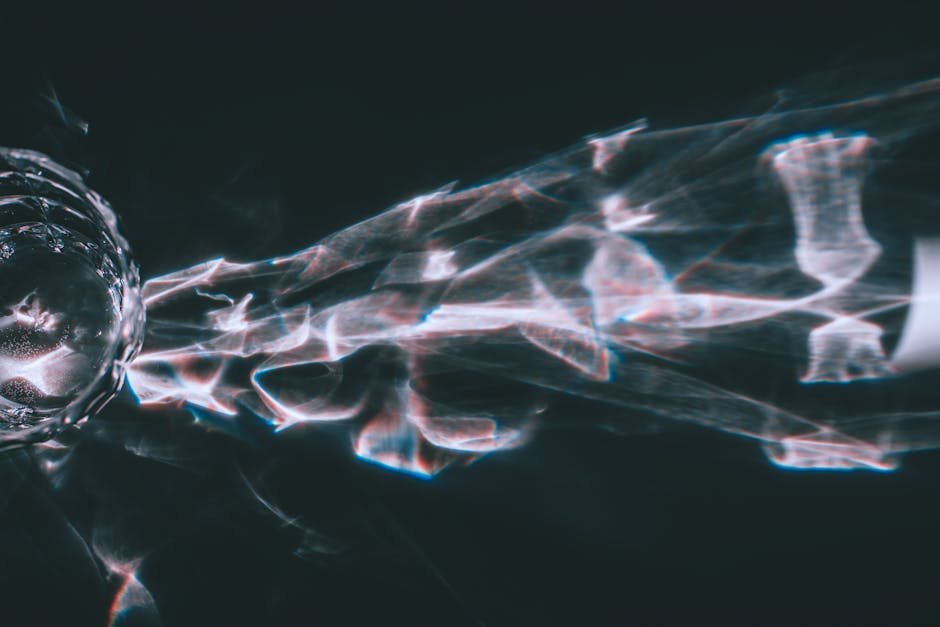

Latent diffusion models transform random noise into a coherent image through a series of denoising steps within a compressed latent space. Because the latent has far fewer values than the full-resolution image, this approach reduces computational cost compared to traditional diffusion models that operate directly in pixel space. The latent space is learned by a Variational Autoencoder (VAE), whose encoder compresses an image into a latent and whose decoder reconstructs an image from it.
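To make the saving concrete, here is a small back-of-the-envelope sketch. The numbers are assumptions chosen for illustration, not values stated in this article: a 512×512 RGB image, a spatial downsampling factor of 8, and 4 latent channels (a configuration used by some widely deployed latent diffusion models). The function names are hypothetical.

```python
def latent_shape(height, width, downsample_factor=8, latent_channels=4):
    """Return (channels, height, width) of the latent for a given image size.

    downsample_factor and latent_channels are illustrative assumptions.
    """
    if height % downsample_factor or width % downsample_factor:
        raise ValueError("image size must be divisible by the downsampling factor")
    return (latent_channels, height // downsample_factor, width // downsample_factor)


def compression_ratio(height, width, image_channels=3, **latent_kwargs):
    """Ratio of values in pixel space to values in latent space."""
    c, h, w = latent_shape(height, width, **latent_kwargs)
    return (image_channels * height * width) / (c * h * w)


if __name__ == "__main__":
    print(latent_shape(512, 512))       # (4, 64, 64)
    print(compression_ratio(512, 512))  # 48.0
```

Under these assumptions the denoiser processes 48 times fewer values per step than a pixel-space model would, which is where most of the efficiency gain comes from.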

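The image synthesis process begins by sampling Gaussian noise with the same shape as a latent, followed by a sequence of refinement steps in which a learned denoising model removes a little noise at a time. The denoising model is conditioned on the current timestep and, optionally, on an external input such as a text prompt, which lets it generate diverse, high-quality images. Finally, the VAE decoder maps the denoised latent back to pixel space.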
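The sampling loop described above can be sketched in a few lines. This is a minimal illustration and not a real model: it assumes a DDPM-style update with a linear noise schedule, represents the latent as a flat list of floats, and replaces the trained network with a hypothetical `oracle_noise_predictor` that already knows the clean latent so the loop can run end to end. All names and schedule values are assumptions.

```python
import math
import random


def make_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule: returns (betas, alphas, cumulative alpha products)."""
    if num_steps < 2:
        raise ValueError("num_steps must be at least 2")
    step = (beta_end - beta_start) / (num_steps - 1)
    betas = [beta_start + i * step for i in range(num_steps)]
    alphas = [1.0 - b for b in betas]
    alpha_bars, running = [], 1.0
    for a in alphas:
        running *= a
        alpha_bars.append(running)
    return betas, alphas, alpha_bars


def oracle_noise_predictor(z_t, t, alpha_bars, z0_target):
    """HYPOTHETICAL stand-in for a trained denoising network.

    It "cheats" by knowing the clean latent z0_target and returns the exact
    noise that separates z_t from it. A real model must learn this mapping.
    """
    scale = math.sqrt(alpha_bars[t])
    sigma = math.sqrt(1.0 - alpha_bars[t])
    return [(z - scale * z0) / sigma for z, z0 in zip(z_t, z0_target)]


def sample_latent(z0_target, num_steps=1000, seed=0):
    """Run the reverse (denoising) chain from pure noise down to step 0."""
    rng = random.Random(seed)
    betas, alphas, alpha_bars = make_schedule(num_steps)
    z = [rng.gauss(0.0, 1.0) for _ in z0_target]  # start from Gaussian noise
    for t in reversed(range(num_steps)):
        eps = oracle_noise_predictor(z, t, alpha_bars, z0_target)
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        inv = 1.0 / math.sqrt(alphas[t])
        mean = [inv * (zi - coef * ei) for zi, ei in zip(z, eps)]
        if t > 0:
            # Re-inject a small amount of noise on every step except the last.
            sigma = math.sqrt(betas[t])
            z = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
        else:
            z = mean
    return z


if __name__ == "__main__":
    target = [0.5, -1.0, 2.0, 0.0]
    print(sample_latent(target, num_steps=50))
```

With the oracle in place the loop recovers the target latent up to floating-point error, which is useful only as a check that the update rule is wired correctly. In a real pipeline the predictor would be a trained network and the returned latent would be passed to the VAE decoder to obtain an image.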

Key Components and Their Functions in High-Resolution Image Synthesis with Latent Diffusion Models

- Variational Autoencoder (VAE): Encodes images into a latent representation and decodes latent vectors back into image space.

- Denoising Model: A neural network trained to remove noise from a latent vector.

- Latent Space: A compressed representation of images where the diffusion process occurs.

- Diffusion Process: A Markov chain that gradually adds noise to a latent (the forward process); the model learns to reverse it step by step, turning noise into a coherent latent.

- Conditioning Mechanism: Allows the model to generate images based on specific inputs, such as text prompts or class labels.
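The components above can be tied together in a few equations. The notation is an assumption, since this article does not fix one; it follows the common DDPM-style formulation. Here `\mathcal{E}` is the VAE encoder, `z_0 = \mathcal{E}(x)` is the clean latent of image `x`, `\beta_t` is the noise schedule, `\epsilon_\theta` is the denoising model, and `c` is an optional conditioning input.

```latex
% Forward (noising) step of the Markov chain in latent space
q(z_t \mid z_{t-1}) = \mathcal{N}\!\left(z_t;\ \sqrt{1-\beta_t}\, z_{t-1},\ \beta_t I\right)

% Closed form for any step t, with \bar{\alpha}_t = \prod_{s=1}^{t} (1-\beta_s)
q(z_t \mid z_0) = \mathcal{N}\!\left(z_t;\ \sqrt{\bar{\alpha}_t}\, z_0,\ (1-\bar{\alpha}_t) I\right)

% Noise-prediction training objective for the denoising model
L = \mathbb{E}_{z_0 = \mathcal{E}(x),\ \epsilon \sim \mathcal{N}(0, I),\ t}
    \left[ \lVert \epsilon - \epsilon_\theta(z_t, t, c) \rVert_2^2 \right]
```

The closed form is what makes training practical: a noisy latent for any step can be drawn directly from the clean latent without simulating every intermediate step of the chain.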

Comparing Latent Diffusion Models to Traditional Methods

| Method | Image Quality | Computational Cost | Flexibility |
|---|---|---|---|
| Latent Diffusion Models | High | Moderate | High |
| GANs | High | High | Moderate |
| Traditional Diffusion Models | High | Very High | High |
| VAE-based Methods | Moderate | Low | Moderate |

Latent diffusion models balance image quality, computational cost, and flexibility, making them suitable for high-resolution image synthesis tasks. Compared to GANs, they are generally more stable to train; compared to traditional diffusion models that operate in pixel space, they are more efficient.

Practical Applications and Challenges

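High-resolution image synthesis with latent diffusion models has a wide range of applications, from artistic creation to data augmentation for machine learning models. However, challenges remain: sampling is iterative and therefore slower than a single forward pass through a GAN, compression into the latent space can lose fine detail, and training still requires large datasets.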

Researchers are exploring techniques to mitigate these issues, including improved training methodologies and architectural innovations. Developing more efficient and robust latent diffusion models is crucial for their widespread adoption.

Conclusion

High-resolution image synthesis with latent diffusion models represents a significant advancement in AI-driven image generation. Understanding its underlying mechanics and applications can help developers harness its potential. As this field evolves, we can expect more sophisticated tools for image synthesis to emerge.

FAQs

What are the primary advantages of using latent diffusion models for image synthesis?

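Latent diffusion models offer a balance between high image quality and computational efficiency, because denoising runs in a compressed latent space rather than in pixel space. Their conditioning mechanism also makes them suitable for a range of applications, from artistic creation to data augmentation.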

How do latent diffusion models compare to GANs in terms of image quality?

Latent diffusion models can achieve comparable or superior image quality to GANs. They are also generally more stable during training.

What are some challenges associated with training latent diffusion models?

Training requires large datasets and significant computational resources. Other challenges include slow iterative sampling and the loss of fine detail when images are compressed into the latent space.